Abstract Language Model / Andreas Lutz (DE)

New Animation Art, Ars Electronica Honorary Mention 2025


Artist: Andreas Lutz (DE)

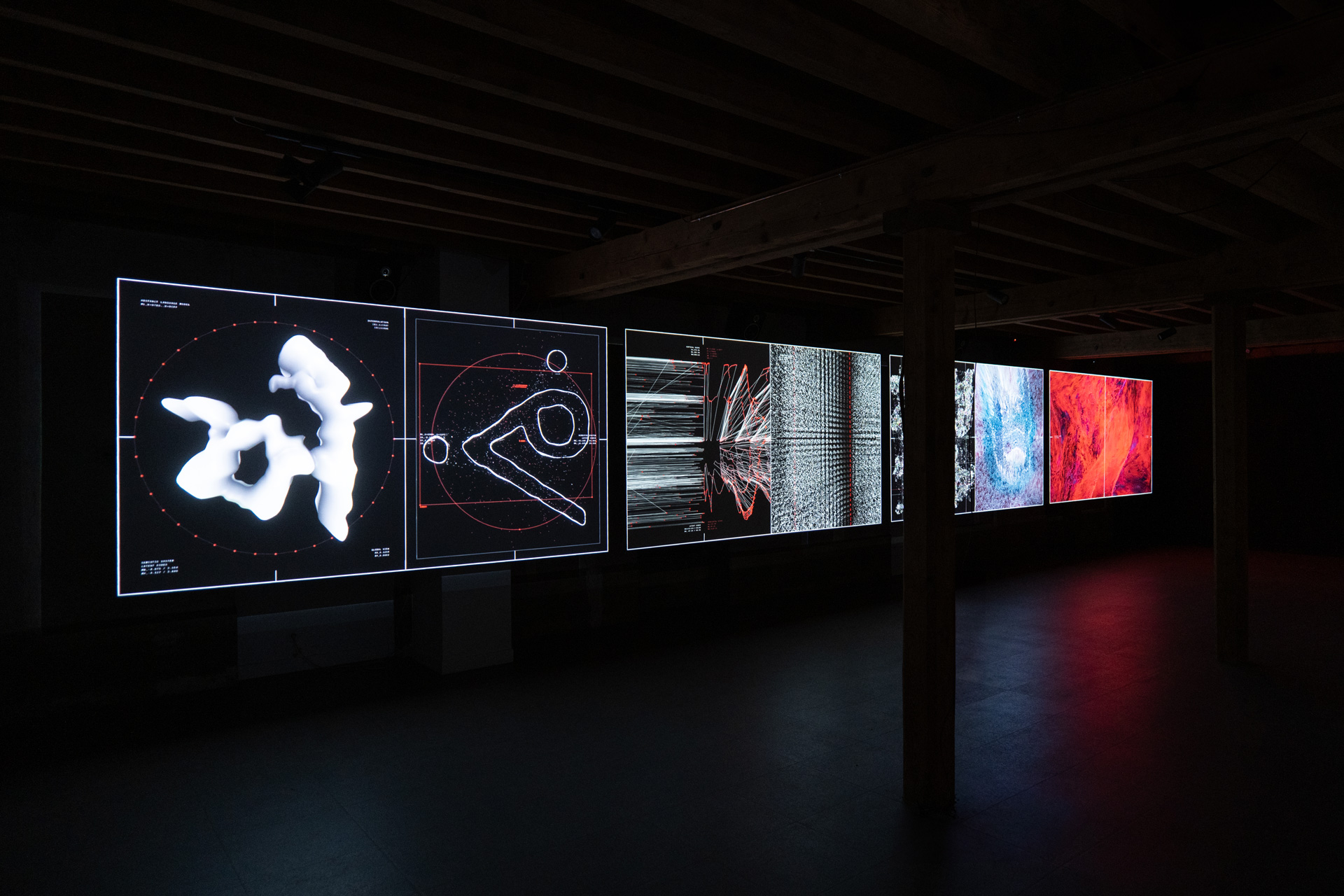

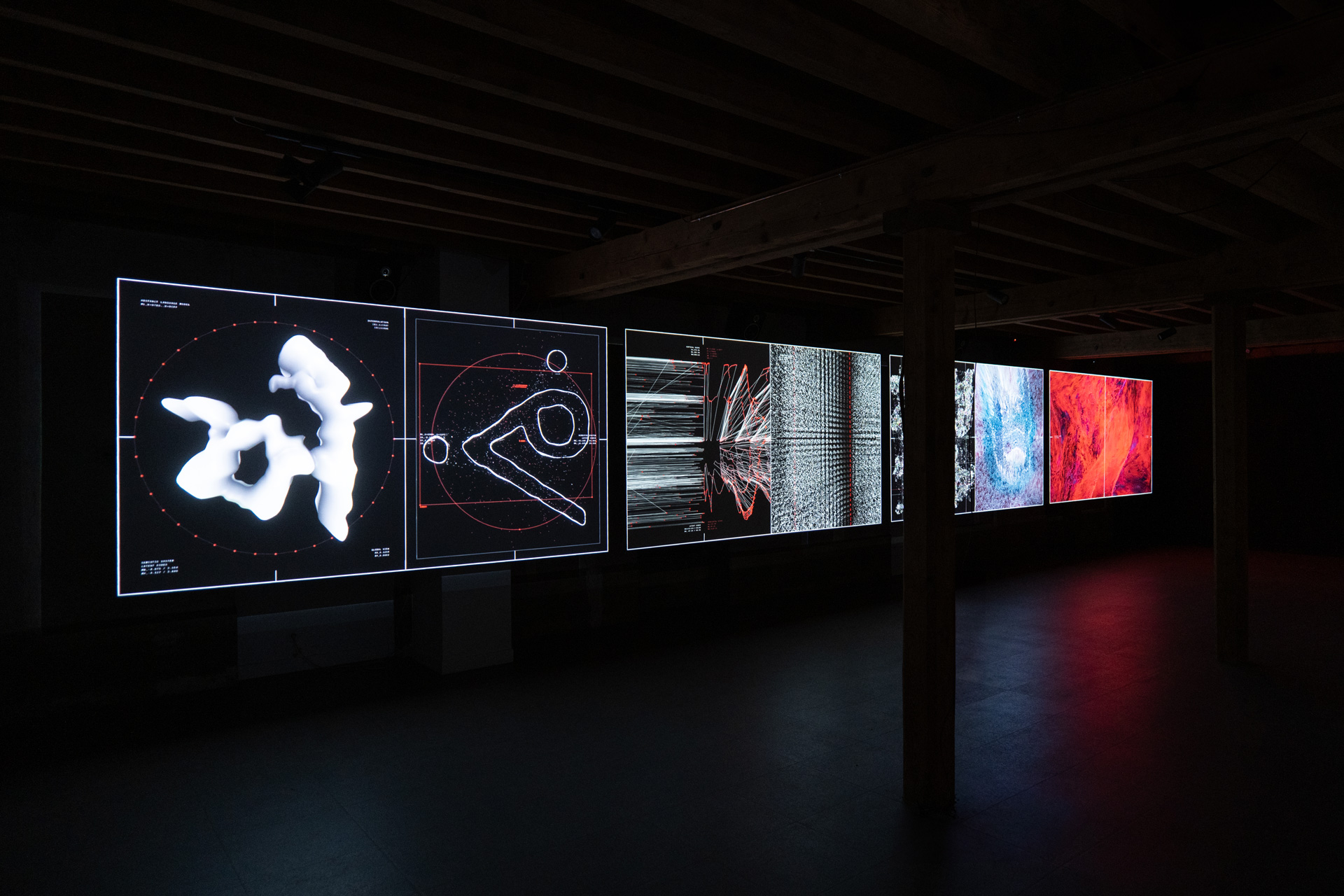

For Abstract Language Model, an artificial neural network was trained on the character sets encoded in the Basic Multilingual Plane of the Unicode Standard (a range of over 65,000 code points). The resulting models translate all of these human sign systems into equally representable, machine-created states. Among these states are latent points at which each character is represented most accurately.

Between these points, however, interpolation becomes possible: between any two previously distinct characters, an infinite number of new characters comes into existence. This can be seen as the origin of a purely machine-created semiotic system. Revealing these “obscured variants” between the known characters leads to the idea of a transitionless, or non-binary, universal language that a self-conscious machine could use to communicate with its human counterpart, and vice versa.
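The idea of "infinite characters between two characters" can be illustrated with a minimal sketch of linear interpolation in a latent space. This is not the artist's implementation: it assumes, hypothetically, that each character has already been encoded as a fixed-length latent vector (for example by an autoencoder), and the vectors `z_a` and `z_b`, as well as the helper names `lerp` and `interpolation_path`, are invented for illustration. Because `t` can take any real value between 0 and 1, the number of intermediate points is unbounded; the sketch simply samples a few of them.

```python
from typing import List

Vector = List[float]


def lerp(a: Vector, b: Vector, t: float) -> Vector:
    """Linearly interpolate between latent vectors a and b; t=0 gives a, t=1 gives b."""
    if len(a) != len(b):
        raise ValueError("latent vectors must have the same dimension")
    return [(1.0 - t) * x + t * y for x, y in zip(a, b)]


def interpolation_path(a: Vector, b: Vector, steps: int) -> List[Vector]:
    """Return `steps` evenly spaced points from a to b, including both endpoints."""
    if steps < 2:
        raise ValueError("need at least two steps to include both endpoints")
    return [lerp(a, b, i / (steps - 1)) for i in range(steps)]


# Hypothetical 4-dimensional latent codes for two characters, standing in for
# what an encoder might produce for "A" (U+0041) and "あ" (U+3042).
z_a = [0.0, 1.0, -0.5, 2.0]
z_b = [1.0, -1.0, 0.5, 0.0]

if __name__ == "__main__":
    for z in interpolation_path(z_a, z_b, steps=5):
        print([round(v, 3) for v in z])
```

In a full system, each intermediate vector would be passed to a decoder to render a glyph image; that decoder is not shown here. Linear interpolation is the simplest choice; spherical interpolation (slerp) is a common alternative for latent spaces with a Gaussian prior.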

These processes are visualized in the four-channel video installation Abstract Language Model (Sync). The audio-visual sequence consists of four synchronized visualizations passing through seven states (Extraction, Analysis, Rearrange, Process, Transformation, Learning, and Language). It is based on real-time interpolation through the trained models and depicts the transformation into a trans-human / trans-machine language.

Abstract Language Model (Live) is the audio-visual live-performance counterpart to the installation. The 45-minute performance is presented as a single-channel version with real-time generated visuals and stereo sound.

Lutz has already used the conceptual idea of a hypothetical language model for self-conscious machines, and of their possible forms of expression, in previous sculptures and installations. Abstract Language Model now serves as the semiotic system for the current versions of these works.

ref.:

https://archive.aec.at/prix/302810/

https://andreaslutz.com/abstract-language-model-sync/

http://ayoungkim.com/wp/3col/delivery-dancers-sphere-2022

Presentation slides: https://docs.google.com/presentation/d/1KmdUnATHBwyxdmBJYJDqOXgwX6X1vJN9/edit?usp=sharing&ouid=104134464484600756439&rtpof=true&sd=true