Guanaquerx

Paula Gaetano Adi

ARS Electronica 2025 Prix

References:

artwork website: Guanaquerx

see the artwork statement here: Ars Electronica Archive

author: Paula Gaetano-Adi | RISD

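Abstract

This research proposes a vision of "Awareness of Things" (AoT), arguing that data sharing between objects should be semantic, selective, and respectful of user privacy, moving beyond the current IoT's emphasis on connectivity alone. Using a "research as design" approach, it explores through several prototypes (including the Peekaboo Camera and design tools) how objects collect, transmit, and interpret contextual data. It concludes with an AoT design framework that helps designers move from single objects to collaborative data flows across multiple objects, building smart-object systems that are more meaningful and privacy-friendly.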

Keywords:Awareness of Things (AoT), Data-enabled Objects, Privacy-aware Design, Constellation Design, Semantic & Episodic Speculation

Thesis URL: https://etheses.lib.ntust.edu.tw/thesis/detail/4e354c43f7acb5d39c0d2577bfb0c90f/

Author: 陳宜惠

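Abstract

As technology develops, humanity keeps exploring the universe and expanding into every field, yet a taken-for-granted attitude has produced an external environment of uniform digitization and a binary inner spiritual world. Although interdisciplinary scholarship has long been on the rise, how creative practice can overcome the layered constraints of disciplinary frameworks still requires in-depth study. Starting from the current situation of interdisciplinary creation, the author studies many works from the past half century that have yet to be fully interpreted or accepted by viewers, and finds that beyond forward-looking thinking they also disclose deeper messages. These messages are signals radiating from various phenomena: continuous, discontinuous, or even momentary. The author therefore holds that interdisciplinary creation must not only split all known messages but also possess many kinds of awareness in order to handle signals that belong to no field. In other words, interdisciplinary creation should have a vast substrate filled with signals of all kinds, including digital, analog, and mixed digital-analog signals, as well as many more that cannot be processed by ordinary senses or scientific methods.

In this context, this dissertation examines the characteristics of "post-interdisciplinary creation" and how it can both create outwardly without oppression and generate inwardly, so that the energy of its substrate keeps gathering and transforming. The purpose of the dissertation is to argue for the evolution from interdisciplinary to post-interdisciplinary creation: beyond identifying problems or expressing messages, it uses successive splitting to let the more obscure and complex signals of the chaosmos emerge on their own. The hope is that post-interdisciplinary creation stands not only at the front line of creation but also at its deepest reach, resonating with all fields, continually connecting and cohering, as a liberation of fields and theories. At the same time, by uncovering and generating real latent signals as they are transmitted, received, and transformed, every meaning that differs and derives from post-interdisciplinary creation can be given due attention. To follow the signals that have appeared around the author without interruption, this extremely time-consuming research requires not only a multidisciplinary background but also an inquiry into the differences of micro-splitting. The author therefore draws on the philosophy of Gilles Deleuze (1925-1995), taking his concept of differentiation/differenciation from difference and repetition as the research method, and, through theoretical research and interdisciplinary practice, gradually constructs the multiple relations between interdisciplinary creation and various signals.

After the introduction in Chapter 1 and through Chapter 6, the dissertation analyzes a range of interdisciplinary works that differ from one another but can each be thought through splitting. Whatever their category (technological interaction, social practice, concept and action, audio-visual, mixed media, curatorial creation, and so on), the whole can be described as a large-scale theoretical curatorial structure. It moves from digital signals to the analog signals that human perception can receive, and on to mixed analog-digital signals, and splits again from within them to stimulate and actualize the potential of post-interdisciplinary creation, so as to attune to and respond to the subtler signals of the chaosmos. The research finally proposes that post-interdisciplinary creation can use any creative approach to explore signals; whether minimal or extreme, each is a sensory and active awareness at the threshold of the flesh, synthesizing unique messages and meanings, reconciling the splits caused by opposition, and replacing possible conflict and impasse with deep relational links. Because post-interdisciplinary creation can continually differ and repeat in space-time and meet every unknown with a more open attitude, it crosses over between fields and loosens fixed, rigid knowledge. More importantly, post-interdisciplinary creation keeps splitting from producing opposition; instead, it yields dual subjects that move from mutual negation in a dialectical relation to joint affirmation in a differential relation, letting vast splits shade into subtle differences that must be perceived, and thereby achieving a split continuum integrated with space-time.

Keywords

Post-interdisciplinary creation, split continuum, difference and repetition, differential relation, chaosmos signals, threshold of the flesh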

Full Paper

https://hdl.handle.net/11296/2edyz2

Presentation

Author – Wu, Ziwei (吳子薇), PhD in Academy of Interdisciplinary Studies, Hong Kong University of Science and Technology

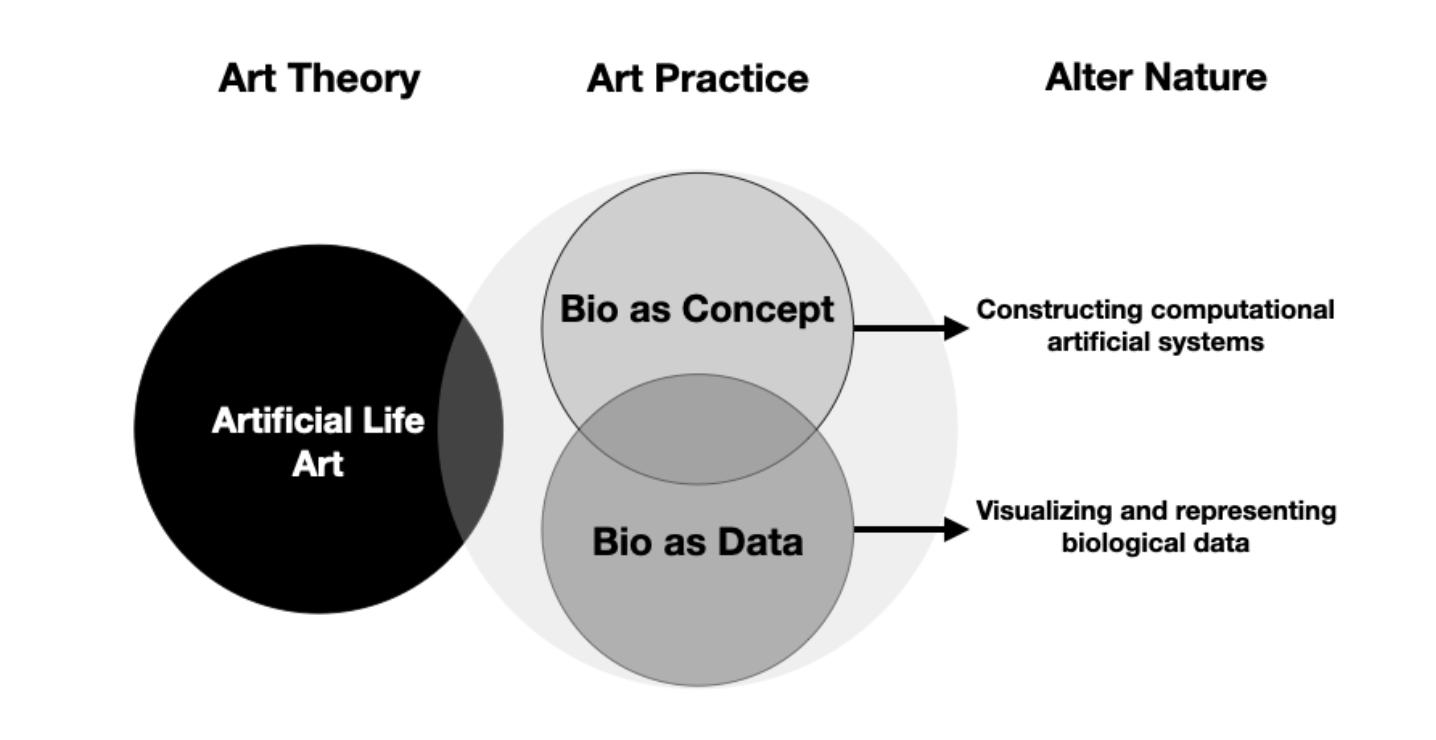

Abstract– Emerging in the late 1980s amid explorations of computing, robotics, and synthetic biology, Artificial Life (ALife) investigates two conceptions of life and their natural processes, “life-as-we-know-it” and “life-as-it-might-be”, through generative systems that mimic biological phenomena. These conceptions provide perspectives on life for understanding altered and altering nature. While traditional ALife approaches (such as genetic algorithms and agent-based systems) reconstruct life within computational media, contemporary data-driven AI further extends this paradigm. The proliferation of AI-generated content and algorithmic creatures redefines life through data inputs, intensifying the interplay between technology and biology. This convergence necessitates a critical examination of Alter Nature: how human interventions, from artificial ecosystems to bio-digital hybrids, reshape natural processes. To address the two conceptions, this thesis proposes two theoretical frameworks to investigate these processes in the artistic context: 1. Bio as Concept: Constructing computational artificial systems with the aim of altering nature. 2. Bio as Data: Visualizing and interpreting biological data through AI and generative tools, examining how human activities have altered nature. Through research and artistic practice, this thesis documents the theoretical frameworks for Alter Nature in computational art.

分神—接枝:以資本主義與技術論析虛擬實境藝術的延異空間

Divergence – Grafting: An Analysis of the Space of Difference in Virtual Reality Art via Capitalism and Technics

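Abstract: With the rapid development of virtual technologies, large-scale capital investment and explosive growth in applications are driving an unprecedented surge in artistic creative energy. Faced with new forms of virtual space, are existing theories of capital and space still adequate to describe them? Or, as David Harvey found when discussing "Ground Zero", can existing spatial theories no longer fully cover current phenomena, so that a new interpretive framework for space must be rethought and constructed? On this basis, this paper attempts to open a path of thinking different from existing discourse, advancing another "spatial turn" on the foundation of existing spatial concepts. Taking "mixed-reality performance" as its research core, the paper combines David Harvey's theory of capital and space with Bernard Stiegler's philosophy of technics to examine the dialectical relation among capital, technics, and space-time, and further analyzes how virtual space reshapes the generative logic and the experience of space today. Through the individual's immersive expectations of virtual space and the flow of desire, it examines the interrupted state brought about by "divergence". The individual drifts along the chaotic boundary between performance space and virtual space, triggering space's mechanisms of self-adjustment and stabilization, which in turn "graft" a spatial field that is differential and rich in potential. This sequence of divergent enactment and self-healing grafting leads to another mode of perceptual switching, the "divergence-grafting" shift in shared sensation, which folds to generate a "space of difference". Performance space, virtual space, and the space of difference thus come to coexist, forming a multilayered spatial relation. This structure reverses the relation between the "temporality of space" and the "spatiality of time", loosens existing spatial concepts so that they keep mutating like a convective ecology, and opens contemporary space to reinterpretation and a new turn.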

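Keywords: performance space, virtual space, space of difference, divergence-grafting, mixed-reality performance, theory of capital and space, philosophy of technics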

Full Paper : https://hdl.handle.net/11296/9rz87v

Presentation: PPT

策展典範的轉移?文化平權趨勢下當代藝術的策展思維與實踐

Paradigm Shift of Curatorship? Curatorial Ideas and Practice of Contemporary Art under the Trends of Social Inclusion

National Taiwan University of Arts, Graduate School of Arts Management and Cultural Policy, doctoral dissertation

Advisor: Dr. 蔡幸芝

Student: 詹話字

Date: 2022/1

Abstract:

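This study examines how virtual reality (VR) affects empathy and helping intention through "embodying another person's experience". It comprises two experiments comparing the effects of immersion level (head-mounted display vs. desktop computer) and virtual body presentation (visible vs. invisible) on users' psychological processes. The study tests the mediating roles of the plausibility illusion (Psi) and emotional valence between immersive experience and empathic response. Results show that although VR did not directly increase affective or cognitive empathy, it indirectly promoted affective empathy and helping intention by strengthening the plausibility illusion; participants with more negative emotional valence also showed stronger empathic responses. The virtual body manipulation affected participants' emotions and plausibility illusion, and under some conditions produced a serial mediation effect of "virtual body → negative emotion → affective empathy → helping intention". The findings indicate that the key to VR-induced empathy lies not in immersion itself but in the psychological mechanisms behind users' sense of the situation's realism and their self-projection into it.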

Keywords: plausibility illusion, helping intention, affective empathy, virtual reality, virtual embodiment, cognitive empathy
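The serial mediation chain named in the abstract (virtual body → negative emotion → affective empathy → helping intention) can be illustrated with a minimal sketch. This is not the thesis's analysis: the data are simulated, the path coefficients (0.5, 0.4, 0.3) are arbitrary assumptions, and the helper names `ols_slopes` and `serial_indirect_effect` are hypothetical. It only shows the common product-of-coefficients way of estimating an indirect effect along a two-mediator chain with ordinary least squares.

```python
import numpy as np

def ols_slopes(y, *predictors):
    """Fit y on the predictors by least squares and return the slopes (intercept dropped)."""
    X = np.column_stack([np.ones(len(y)), *predictors])
    coef, *_ = np.linalg.lstsq(X, y, rcond=None)
    return coef[1:]

def serial_indirect_effect(x, m1, m2, y):
    """Product-of-coefficients estimate for the chain x -> m1 -> m2 -> y."""
    a = ols_slopes(m1, x)[0]          # x -> m1
    d = ols_slopes(m2, x, m1)[1]      # m1 -> m2, controlling for x
    b = ols_slopes(y, x, m1, m2)[2]   # m2 -> y, controlling for x and m1
    return a * d * b

# Simulated data; all effect sizes below are illustrative assumptions.
rng = np.random.default_rng(0)
n = 20000
x = rng.integers(0, 2, n).astype(float)   # virtual body visible (1) or not (0)
m1 = 0.5 * x + rng.normal(size=n)         # negative emotion
m2 = 0.4 * m1 + rng.normal(size=n)        # affective empathy
y = 0.3 * m2 + rng.normal(size=n)         # helping intention

effect = serial_indirect_effect(x, m1, m2, y)
print(f"estimated serial indirect effect: {effect:.3f}")
```

With these assumed coefficients the true indirect effect is 0.5 × 0.4 × 0.3 = 0.06, so the estimate should land near that value. In practice a bootstrap confidence interval is usually reported alongside the point estimate.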

Reference:

吳岱芸 (2022). 《感同「身受」:虛擬實境體現他人經驗對同理心與助人意願的強化效果》. Master's thesis, College of Communication, National Chengchi University.

Advisor: 林日璇.

DOI / https://hdl.handle.net/11296/9qdwht

Presentation:

PPT

– SIGGRAPH 2024

Author: Florian Christoph Bruggisser, Chris Elvis Leisi, Pascal Lund-Jensen, Martin Fröhlich and Christopher Lloyd Salter

Abstract

This paper describes the artistic context, technical implementation, user study, evaluation and future work around reconFIGURE, a participatory installation exploring the transformation of human bodies through computational systems. The installation involves capturing visitors’ images, transforming these into animated 3D doppelgängers, and projecting them on a large-screen. Unique in its rapid transformation from 2D to 3D, reconFIGURE highlights the aesthetic-social repercussions of transforming whole human body images using machine learning.

Keywords

computation, algorithmic, generative, human interaction, interface, installation, interaction, machine learning, perception, body

Reference

https://dl.acm.org/doi/10.1145/3664208

https://blog.zhdk.ch/immersivearts/reconfigure/

Presentation

CineVision: An Interactive Pre-visualization Storyboard System for Director-Cinematographer Collaboration

– Proceedings of the ACM Symposium on User Interface Software and Technology (UIST) 2025, Busan, Republic of Korea

Keywords – storyboard, pre-visualization, AI-assisted filmmaking, director-cinematographer collaboration

Summary – This paper introduces CineVision, an AI-driven interactive platform aimed at enhancing collaboration between film directors and cinematographers during the pre-production phase. Traditional methods rely heavily on hand-drawn storyboards and verbal briefings, which often fall short in visual clarity and iteration speed. CineVision integrates scriptwriting input with real-time visual pre-visualization, offering functionalities such as dynamic lighting control, filmmaker-style visual emulation, and customizable character design. In a lab study involving 24 participants, the system demonstrated reduced task times and improved usability compared to two baseline methods. The findings suggest that by facilitating rapid iteration, clearer visual communication, and aligned creative intent, CineVision has the potential to streamline early film production workflows and foster deeper synergy between directors and cinematographers.

ReCollection: Creating synthetic memories with AI in an interactive art installation

– Proceedings of the ACM on Computer Graphics and Interactive Techniques, Volume 7, Issue 4

Keywords – Experimental Visualization, Intelligent System Design, Interactive Art

Summary – This paper describes the conceptual background, artificial intelligence (AI) system design, and visualization strategies of an interactive AI art experience: ReCollection. In the art installation, the artwork assembles collective visual memories based on participants’ language input, blurring the boundaries between remembrance and imagination through intelligent system design and experimental visualization. By providing a conceptual framework for non-linear narratives, constituting symbiotic imaginations for memories, this artwork aims to provide an inclusive art experience that inspires collective memory reproduction by providing an intimate recollection of symbiosis between beings and apparatus. Furthermore, this work provides a future prototype that cultivates empathy for the dementia community by investigating the tensions in the correlations between visual representations and narratives, as well as those between the past, present, and future.

Full Paper : 1029_科技藝術書報討論_李鍵

Authors – Bo Xu, Junzhe Zheng, Jiayuan He, Yuxuan Sun, Hongfei Lin, Liang Zhao, Feng Xia

Keywords – meme understanding, metaphor, multimodal

Summary – Understanding memes is challenging because they contain metaphorical information that requires deep interpretation. Previous studies have added human-annotated metaphors as textual features in machine learning models but often ignored the link between metaphors and corresponding visual elements. This paper proposes MMMC (Multimodal Metaphorical feature for Meme Classification), which jointly models both textual and visual features for better meme understanding. Using a text-conditioned generative adversarial network (GAN), MMMC generates visual features from linguistic cues of metaphorical concepts and integrates them for classification. Experiments on the MET-Meme dataset show that MMMC significantly outperforms existing methods in emotion classification and intention detection.

ACM MM 2024, Multimodal Reasoning & Inference

BleacherBot: AI Agent as a Sports Co-Viewing Partner

Keywords

Co-viewing, AI Agents, Large Language Models (LLMs), Sports Communication, Human-AI Interaction

Abstract

Co-viewing, traditionally defined as watching content together in the same physical space, enhances emotional connections through shared experiences. With the rise of remote viewing during the COVID-19 pandemic, existing solutions, such as second-screen platforms and rule-based AI companions, struggle to facilitate meaningful social interactions. This study explores the potential of Large Language Models, which offer human-like interactions and personalization. Our formative study with ten participants revealed the importance of managing arousal levels, highlighting the need to balance between high- and low-arousal levels across different viewing contexts. Based on these insights, we developed ‘BleacherBot’, a sports co-viewing agent with distinct interaction styles that vary in arousal levels. Our main study with 27 participants demonstrated that matching users’ preferred arousal levels with the agent’s interaction style significantly enhanced their engagement and overall enjoyment. We propose design guidelines for AI co-viewing agents that consider their role as complements to human social interactions.

Sonja Rozental, Michel van Dartel, Alwin de Rooij

ISEA 2025 Art Long Papers

References:

“How Artists Use AI as a Responsive Material for Art Creation” presented by Rozental, van Dartel and de Rooij – ISEA Symposium Archives

Project Results

– ISEA 2025 Long Paper

Author: Amalia Foka

Abstract

She Works, He Works is an interdisciplinary project that combines feminist visual theory, AI critique, and artistic practice to examine gender representation in AI-generated imagery. By juxtaposing text-to-image outputs of male and female construction workers, it reveals how societal biases manifest in generative AI systems. The study employs critically crafted prompts that blend traditionally gendered traits with unconventional contexts, exposing disparities in representation emphasizing aesthetics and agility for women versus authority and professionalism for men. Grounded in Griselda Pollock’s feminist insights on visual culture, the project positions AI as both a technological and cultural actor, capable of perpetuating stereotypes and enabling critique. As both a study and an artwork, the project underscores the transformative potential of AI to reimagine gender norms and inspire equitable narratives. It highlights the ethical implications of AI in creative industries, calling for transparent practices, diverse datasets, and reflexive engagement with AI’s cultural impact. Through its integration of critical analysis and artistic exploration, She Works, He Works contributes to broader discussions on inclusive AI development and the role of art in fostering societal change. This dual approach showcases the power of AI as a medium for critique and a tool for envisioning more just and inclusive futures.

Keywords

Gender representation, text-to-image models, critical prompt engineering, AI-generated art, bias in AI, feminist visual theory, cultural representation, ethical AI development, societal stereotypes

Reference

Amalia Foka, Computer Science Applications for the Arts, University of Ioannina

Presentation

Being in Virtual Worlds: How Interaction, Environment, and Touch Shape Embodiment in Immersive Experiences

– CHI 2025, ACM SIGCHI Conference on Human Factors in Computing Systems

Keywords – embodiment, presence, interaction, touch, virtual reality

Summary –

This paper reconceptualizes embodiment in virtual reality by arguing that traditional approaches (focusing mainly on body ownership via visual-tactile synchronization) are too narrow. Instead, the authors propose that VR embodiment emerges through the dynamic interplay of interaction, environment, and touch. They build an Interactional Framework of Virtual Embodiment, integrating perspectives from philosophy, psychology, HCI, and VR. Through case comparisons (such as pseudo-haptics, shared avatars, and creative VR installations) and reflective design practice, they derive 12 VR design principles aimed at enhancing presence and meaningful embodied experience in virtual worlds.